PaSQL - Prompt as SQL: Why I Built a Compiler for AI Requests

It Started With a Frustration in November

Back in November 2025, I was deep into building out my VMS MCP server, a C# .NET system with dozens of tools connecting Claude to real business data: subscriber analytics, revenue reports, churn analysis, campaign performance. The architecture was solid. The tools worked.

But there was a pattern I kept seeing that bothered me.

My end users (analysts, operations managers, business stakeholders) would ask Claude things like:

"Give me a churn report for Q2, you know, the subscribers, exclude the trials, sort it somehow, keep it short."

And Claude would do its best. Sometimes great. Sometimes not. The outputs drifted depending on how the question was phrased. The same question asked two different ways could produce two completely different analyses. There was no structure. No discipline. No way to govern what was being asked before it reached the model.

I kept thinking: these are data people. They know how to think in structured terms. They write SQL every day. They understand WHERE clauses and ORDER BY and LIMIT. Why are we asking them to abandon all of that discipline the moment they talk to an AI?

The idea that formed was simple: what if AI prompts could be structured like SQL?

What if, instead of writing a paragraph and hoping for the best, you declared exactly what you wanted, the way SQL forces you to declare exactly what data you need?

The Oldest Language Still Teaching Us Things

SQL turned 50 years old recently. It was designed in the early 1970s at IBM, and it solved a problem that sounds remarkably familiar: how do you give non-programmers a structured, governed, auditable way to query complex data?

The answer was clauses. Specific, ordered, purposeful:

SELECT - what do you want?

FROM - where does it live?

WHERE - what conditions apply?

ORDER BY - what matters most?

LIMIT - how much do you need?

Every SQL query forces the person writing it to think through the request before executing it. You can't write a vague SQL query. The database either parses it or it doesn't. The structure enforces clarity.

AI prompts have no such discipline. They're freeform, unvalidated, and unauditable. The same request written two different ways costs a different number of tokens, produces different outputs, and leaves no trace of what was actually asked.

The "what if" I kept coming back to: what if we applied SQL's discipline to AI requests?

Then Tokens Got More Expensive

I sat on the idea through November and into early 2026. Building other things. Expanding the MCP server. Working on the stablecoin payment integration. Life moved fast.

Then came a Claude update that changed the token economics. I was already tracking token costs carefully; in February I had a conversation about how token bloat is one of the leading causes of agent failures in production systems. The research was stark: in a conventional MCP setup with many tools, the client serializes hundreds of tool definitions into every request, and those schemas aren't token-efficient. One agent cascade burning 10 million tokens in a failed loop can wipe out months of cost savings from cheaper per-token pricing.

That conversation crystallized something for me. The problem wasn't just prompt quality. It was prompt efficiency. Unstructured prompts waste tokens because the model has to spend tokens interpreting intent, selecting tools, and deciding output format, all things that could be declared upfront. To be fair, the idea of efficient, structured prompting is not new: over the years I've read several articles proposing JSON and other formats for it.

That's when I finally got around to building PaSQL.

What PaSQL Is

PaSQL stands for Prompt as SQL. It's a structured prompt framework that uses SQL-like clauses to compose AI requests.

Instead of writing:

"Give me a churn analysis for Jennifer Craiglist subscribers in Q2, exclude trials, sort by worst performing, keep it concise"

You write:

```
SELECT churn rate by product tier
FROM Jennifer Craiglist subscriber data
WHERE quarter = Q2 2025
AND exclude trial accounts
AND flag segments above 15% churn
ORDER BY highest churn first
LIMIT executive summary + 3 key findings
```

Same intent. Completely different discipline.

You see, every clause has a purpose:

| Clause | Purpose |
|---|---|
| SELECT | What you want - the output |
| FROM | The data source or domain |
| WHERE | The primary condition or question |
| AND | Constraints, filters, exclusions |
| ORDER BY | What to prioritize |
| LIMIT | Output format and length |

The structure forces you to think through the request before submitting it. Missing a LIMIT? The compiler tells you. Using a dangerous verb in SELECT? Blocked by policy. Referencing an unauthorized data source in FROM? Rejected before it touches the model.

The Compiler

PaSQL isn't just a naming convention. It's a full compiler, written in C# on .NET (in my view, the best enterprise language on the market for rapid application development).

The pipeline:

PaSQL text

→ Parser (tokenizes clauses, handles continuations)

→ AST (strongly-typed intermediate representation)

→ Diagnostics (errors and warnings with line/column)

→ Policy Layer (structural governance before LLM execution)

→ Renderer (AST → natural language prompt)

→ Output

The parser handles multi-line clause values, continuation lines, ORDER BY as a two-token keyword, comments, and size limits. It's not a regex. It's a proper lexer.
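To make those parsing rules concrete, here is a minimal C# sketch of a clause lexer. It is not PaSQL's actual parser: the names `PaSqlLexer`, `Clause`, and `Diagnostic`, the `--` comment syntax, the rule that keywords must be uppercase, and the 8,000-character limit are all assumptions for illustration. It splits the text into words while recording line and column, opens a new clause at each keyword (treating `ORDER` followed by `BY` as one token), and appends every other word to the open clause, which is how continuation lines fold into a single value.

```csharp
using System;
using System.Collections.Generic;

// Compiler messages carry a 1-based line and column so an editor can point at the problem.
public enum Severity { Error, Warning }
public sealed record Diagnostic(Severity Severity, string Message, int Line, int Column);

// One parsed clause, e.g. Keyword "ORDER BY", Value "highest churn first".
public sealed record Clause(string Keyword, string Value, int Line, int Column);

public static class PaSqlLexer
{
    const int MaxChars = 8_000; // hypothetical size limit
    static readonly HashSet<string> Keywords = new() { "SELECT", "FROM", "WHERE", "AND", "LIMIT" };
    static readonly HashSet<string> SingleUse = new() { "SELECT", "FROM", "ORDER BY", "LIMIT" };

    public static (List<Clause> Clauses, List<Diagnostic> Diagnostics) Parse(string text)
    {
        var clauses = new List<Clause>();
        var diags = new List<Diagnostic>();
        if (text.Length > MaxChars)
        {
            diags.Add(new(Severity.Error, $"Query exceeds {MaxChars} characters", 1, 1));
            return (clauses, diags);
        }

        // 1. Lex: whitespace-separated words with positions; "--" starts a line comment.
        var words = new List<(string Text, int Line, int Col)>();
        string[] lines = text.Split('\n');
        for (int i = 0; i < lines.Length; i++)
        {
            string line = lines[i].TrimEnd('\r');
            int cut = line.IndexOf("--", StringComparison.Ordinal);
            if (cut >= 0) line = line[..cut];
            for (int c = 0; c < line.Length;)
            {
                if (char.IsWhiteSpace(line[c])) { c++; continue; }
                int start = c;
                while (c < line.Length && !char.IsWhiteSpace(line[c])) c++;
                words.Add((line[start..c], i + 1, start + 1));
            }
        }

        // 2. Parse: a keyword opens a clause; any other word, on the same line or a
        //    continuation line, is appended to the value of the open clause.
        string? keyword = null;
        int kwLine = 0, kwCol = 0;
        var value = new List<string>();

        void Flush()
        {
            if (keyword is null) return;
            if (value.Count == 0)
                diags.Add(new(Severity.Error, $"{keyword} has no value", kwLine, kwCol));
            else if (SingleUse.Contains(keyword) && clauses.Exists(cl => cl.Keyword == keyword))
                diags.Add(new(Severity.Error, $"Duplicate {keyword} clause", kwLine, kwCol));
            else
                clauses.Add(new(keyword, string.Join(" ", value), kwLine, kwCol));
            value.Clear();
        }

        for (int w = 0; w < words.Count; w++)
        {
            var (word, wLine, wCol) = words[w];
            string? kw = Keywords.Contains(word) ? word
                : word == "ORDER" && w + 1 < words.Count && words[w + 1].Text == "BY" ? "ORDER BY"
                : null;
            if (kw is null)
            {
                if (keyword is null)
                    diags.Add(new(Severity.Error, $"'{word}' appears before any clause keyword", wLine, wCol));
                else
                    value.Add(word);
                continue;
            }
            Flush();
            (keyword, kwLine, kwCol) = (kw, wLine, wCol);
            if (kw == "ORDER BY") w++; // skip the BY token
        }
        Flush();

        // 3. Structural checks that need the whole query.
        if (!clauses.Exists(cl => cl.Keyword == "SELECT"))
            diags.Add(new(Severity.Error, "Missing SELECT clause", 1, 1));
        if (!clauses.Exists(cl => cl.Keyword == "LIMIT"))
            diags.Add(new(Severity.Warning, "Missing LIMIT clause", 1, 1));
        return (clauses, diags);
    }
}
```

Because clause boundaries come from keyword tokens rather than line breaks, the one-line and one-clause-per-line forms of the churn query parse to the same clauses. A real lexer would also need an escape for values that legitimately contain an uppercase `AND`.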

The AST is a strongly-typed PaSqlAst object:

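```csharp
using System.Collections.Generic;

public sealed class PaSqlAst
{
    public string? Select { get; init; }               // what you want (the output)
    public string? From { get; init; }                 // data source or domain
    public List<string> Where { get; init; } = new();  // primary conditions
    public List<string> And { get; init; } = new();    // constraints, filters, exclusions
    public string? OrderBy { get; init; }              // what to prioritize
    public string? Limit { get; init; }                // output format and length
}
```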

The policy layer runs structural checks before the prompt ever reaches the LLM:

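```csharp
// approvedSources: the caller's collection of permitted FROM data sources
var pipeline = new PolicyPipeline()
    .Add(new RequireLimitPolicy())                  // LIMIT clause is mandatory
    .Add(new SelectVerbSafelistPolicy())            // only approved verbs in SELECT
    .Add(new FromAllowListPolicy(approvedSources))  // FROM must be an approved source
    .Add(new MaxAndClausesPolicy(10));              // at most 10 AND clauses
```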

You can block dangerous verbs, enforce required clauses, restrict data sources, and cap query complexity, all before a single token hits the model.
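One way those four policies could be implemented is sketched below. Only the class names and constructor arguments come from the snippet above; the `IPromptPolicy` interface, the `Evaluate` method, the verb lexicon, and the default safelist are hypothetical. Each policy inspects the AST and returns either `null` (pass) or a violation message, and the pipeline collects every violation so the caller can reject the prompt before any tokens are spent.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Trimmed copy of the article's AST so this sketch compiles on its own.
public sealed class PaSqlAst
{
    public string? Select { get; init; }
    public string? From { get; init; }
    public List<string> Where { get; init; } = new();
    public List<string> And { get; init; } = new();
    public string? OrderBy { get; init; }
    public string? Limit { get; init; }
}

// Hypothetical contract: null means "passes", otherwise a violation message.
public interface IPromptPolicy
{
    string? Check(PaSqlAst ast);
}

public sealed class RequireLimitPolicy : IPromptPolicy
{
    public string? Check(PaSqlAst ast) =>
        string.IsNullOrWhiteSpace(ast.Limit) ? "LIMIT is required" : null;
}

public sealed class SelectVerbSafelistPolicy : IPromptPolicy
{
    // Hypothetical lexicon of action verbs this policy can recognise in SELECT.
    static readonly HashSet<string> ActionVerbs = new(StringComparer.OrdinalIgnoreCase)
        { "summarize", "analyze", "compare", "list", "delete", "drop", "send", "email", "update", "execute" };
    static readonly string[] DefaultSafe = { "summarize", "analyze", "compare", "list" };
    readonly HashSet<string> _safe;

    public SelectVerbSafelistPolicy(params string[] safeVerbs) =>
        _safe = new(safeVerbs.Length > 0 ? safeVerbs : DefaultSafe, StringComparer.OrdinalIgnoreCase);

    // Any recognised verb that is not on the safelist blocks the query.
    public string? Check(PaSqlAst ast)
    {
        string? bad = (ast.Select ?? "")
            .Split(' ', StringSplitOptions.RemoveEmptyEntries)
            .FirstOrDefault(w => ActionVerbs.Contains(w) && !_safe.Contains(w));
        return bad is null ? null : $"SELECT verb '{bad}' is not on the safelist";
    }
}

public sealed class FromAllowListPolicy : IPromptPolicy
{
    readonly HashSet<string> _allowed;
    public FromAllowListPolicy(IEnumerable<string> approvedSources) =>
        _allowed = new(approvedSources, StringComparer.OrdinalIgnoreCase);

    public string? Check(PaSqlAst ast) =>
        ast.From is not null && _allowed.Contains(ast.From.Trim())
            ? null
            : $"FROM source '{ast.From}' is not approved";
}

public sealed class MaxAndClausesPolicy : IPromptPolicy
{
    readonly int _max;
    public MaxAndClausesPolicy(int max) => _max = max;

    public string? Check(PaSqlAst ast) =>
        ast.And.Count > _max ? $"{ast.And.Count} AND clauses exceed the cap of {_max}" : null;
}

public sealed class PolicyPipeline
{
    readonly List<IPromptPolicy> _policies = new();
    public PolicyPipeline Add(IPromptPolicy policy) { _policies.Add(policy); return this; }

    // Runs every policy; an empty result means the prompt may proceed to rendering.
    public IReadOnlyList<string> Evaluate(PaSqlAst ast) =>
        _policies.Select(p => p.Check(ast)).OfType<string>().ToList();
}
```

Collecting all violations instead of stopping at the first one gives the user a complete list of fixes in one pass, the same way a compiler reports every error in a file.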

The renderer auto-detects query complexity and switches between two styles:

Flowing: for simple queries (1-3 clauses), it produces a single clean sentence.

Structured: for complex analyst queries (4+ AND clauses), it produces labeled sections with bullet points.
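A sketch of that style switch follows. The wording of the rendered prompts is invented, and the threshold is an assumption: the sketch counts only AND clauses and switches to the structured style at four or more. `PromptRenderer` is a hypothetical name, and the AST is a trimmed copy so the block compiles on its own.

```csharp
using System.Collections.Generic;
using System.Linq;
using System.Text;

// Trimmed copy of the article's AST so this sketch compiles on its own.
public sealed class PaSqlAst
{
    public string? Select { get; init; }
    public string? From { get; init; }
    public List<string> Where { get; init; } = new();
    public List<string> And { get; init; } = new();
    public string? OrderBy { get; init; }
    public string? Limit { get; init; }
}

public static class PromptRenderer
{
    // Assumption: four or more AND clauses triggers the structured style.
    const int StructuredThreshold = 4;

    public static string Render(PaSqlAst ast) =>
        ast.And.Count >= StructuredThreshold ? RenderStructured(ast) : RenderFlowing(ast);

    // Flowing style: every clause folded into one sentence.
    static string RenderFlowing(PaSqlAst ast)
    {
        var sb = new StringBuilder($"Provide {ast.Select}");
        if (ast.From is not null) sb.Append($" from {ast.From}");
        var conditions = ast.Where.Concat(ast.And).ToList();
        if (conditions.Count > 0) sb.Append($" where {string.Join(", ", conditions)}");
        if (ast.OrderBy is not null) sb.Append($", prioritizing {ast.OrderBy}");
        sb.Append('.');
        if (ast.Limit is not null) sb.Append($" Format: {ast.Limit}.");
        return sb.ToString();
    }

    // Structured style: labeled sections, with one bullet per AND constraint.
    static string RenderStructured(PaSqlAst ast)
    {
        var sb = new StringBuilder();
        sb.AppendLine($"Task: {ast.Select}");
        if (ast.From is not null) sb.AppendLine($"Data source: {ast.From}");
        if (ast.Where.Count > 0) sb.AppendLine($"Focus: {string.Join("; ", ast.Where)}");
        sb.AppendLine("Constraints:");
        foreach (var a in ast.And) sb.AppendLine($"- {a}");
        if (ast.OrderBy is not null) sb.AppendLine($"Priority: {ast.OrderBy}");
        if (ast.Limit is not null) sb.AppendLine($"Output format: {ast.Limit}");
        return sb.ToString().TrimEnd();
    }
}
```

Both styles read from the same AST, so switching styles changes only presentation, never which constraints reach the model.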

The ORM wraps the compiler in an EF Core-style fluent API:

```csharp
var compiled = PromptDb.Default.Query()
    .Select("churn risk analysis")
    .From("Nutrientsystem subscriber data")
    .Where("identify high risk cancellations within 30 days")
    .And("exclude trial and corporate accounts")
    .And("flag segments above 15% churn")
    .OrderBy("revenue at risk descending")
    .Limit("executive dashboard: summary table + 3 insights")
    .Compile();

var prompt = compiled.ToPrompt(); // rendered natural-language prompt sent to the LLM
var audit = compiled.ToJson();    // JSON AST written to the audit log
```
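Here is a minimal guess at the shape behind that fluent chain, assuming a mutable builder that freezes into the AST on `Compile()`. The internals of `PromptDb`, `QueryBuilder`, and `CompiledPrompt` are hypothetical, and `ToPrompt()` is deliberately simplistic. `ToJson()` uses System.Text.Json with `JsonNamingPolicy.CamelCase`, which yields the `select`/`from`/`where`/`and`/`orderBy`/`limit` keys shown in the audit log.

```csharp
using System;
using System.Collections.Generic;
using System.Text.Json;

// Trimmed copy of the article's AST so this sketch compiles on its own.
public sealed class PaSqlAst
{
    public string? Select { get; init; }
    public string? From { get; init; }
    public List<string> Where { get; init; } = new();
    public List<string> And { get; init; } = new();
    public string? OrderBy { get; init; }
    public string? Limit { get; init; }
}

public sealed class PromptDb
{
    public static PromptDb Default { get; } = new();
    public QueryBuilder Query() => new();
}

public sealed class QueryBuilder
{
    string? _select, _from, _orderBy, _limit;
    readonly List<string> _where = new(), _and = new();

    public QueryBuilder Select(string v) { _select = v; return this; }
    public QueryBuilder From(string v) { _from = v; return this; }
    public QueryBuilder Where(string v) { _where.Add(v); return this; }
    public QueryBuilder And(string v) { _and.Add(v); return this; }
    public QueryBuilder OrderBy(string v) { _orderBy = v; return this; }
    public QueryBuilder Limit(string v) { _limit = v; return this; }

    // Copies builder state into an immutable-by-convention AST; SELECT is mandatory here.
    public CompiledPrompt Compile() =>
        _select is null
            ? throw new InvalidOperationException("SELECT is required")
            : new CompiledPrompt(new PaSqlAst
            {
                Select = _select,
                From = _from,
                Where = new(_where),
                And = new(_and),
                OrderBy = _orderBy,
                Limit = _limit,
            });
}

public sealed class CompiledPrompt
{
    static readonly JsonSerializerOptions JsonOptions = new()
    {
        PropertyNamingPolicy = JsonNamingPolicy.CamelCase, // OrderBy -> "orderBy"
        WriteIndented = true,
    };

    public CompiledPrompt(PaSqlAst ast) => Ast = ast;
    public PaSqlAst Ast { get; }

    // Deliberately simple rendering; the real renderer chooses a flowing or structured style.
    public string ToPrompt() =>
        $"Provide {Ast.Select} from {Ast.From}. Focus: {string.Join("; ", Ast.Where)}. " +
        $"Constraints: {string.Join("; ", Ast.And)}. Prioritize: {Ast.OrderBy}. Format: {Ast.Limit}.";

    // Serializes the AST itself, so the audit log records exactly what was compiled.
    public string ToJson() => JsonSerializer.Serialize(Ast, JsonOptions);
}
```

Serializing the AST rather than the rendered prompt is the key design choice: the log captures the structured request, and the rendered text can always be regenerated from it.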

Why the AST Changes Everything

It's tempting to think of PaSQL as a formatting convention. The moment you introduce a compiled AST, it becomes something entirely different.

The AST is a structured intermediate representation you can inspect and enforce policy against before the prompt hits the LLM. That's not a prompt pattern. That's prompt infrastructure.

Every compiled query produces a JSON audit log:

```json
{
  "select": "churn rate by product tier",
  "from": "Jennifer Craiglist subscriber data",
  "where": ["quarter = Q2 2025"],
  "and": ["exclude trial accounts", "flag segments above 15% churn"],
  "orderBy": "highest churn first",
  "limit": "executive summary + 3 key findings"
}
```

That log is diffable. Fingerprintable. Version-controllable. You can hash it with SHA-256 for a tamper-evident audit trail if you fancy. You can compare prompt versions across deployments. You can prove what was asked, when, and by whom.
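As a sketch of the SHA-256 step, assuming the audit JSON is stored as a string: `AuditFingerprint` is a hypothetical helper built on .NET's `SHA256.HashData` and `Convert.ToHexString` (both available from .NET 5). The digest only proves anything if it is kept somewhere the log writer cannot silently rewrite, such as a separate append-only store.

```csharp
using System;
using System.Security.Cryptography;
using System.Text;

public static class AuditFingerprint
{
    // Lowercase hex SHA-256 digest of the exact UTF-8 bytes of the logged JSON.
    public static string Compute(string auditJson) =>
        Convert.ToHexString(SHA256.HashData(Encoding.UTF8.GetBytes(auditJson))).ToLowerInvariant();

    // Recompute and compare against the digest stored when the prompt was logged;
    // any edit to the JSON, even whitespace, produces a different digest.
    public static bool Verify(string auditJson, string storedDigest) =>
        string.Equals(Compute(auditJson), storedDigest, StringComparison.OrdinalIgnoreCase);
}
```

The hash covers exact bytes, so serialization has to be deterministic (same property order, same formatting). That is one more reason to hash the compiled AST rather than free-form prompt text.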

That's what enterprise-grade governance requires.

The IDE

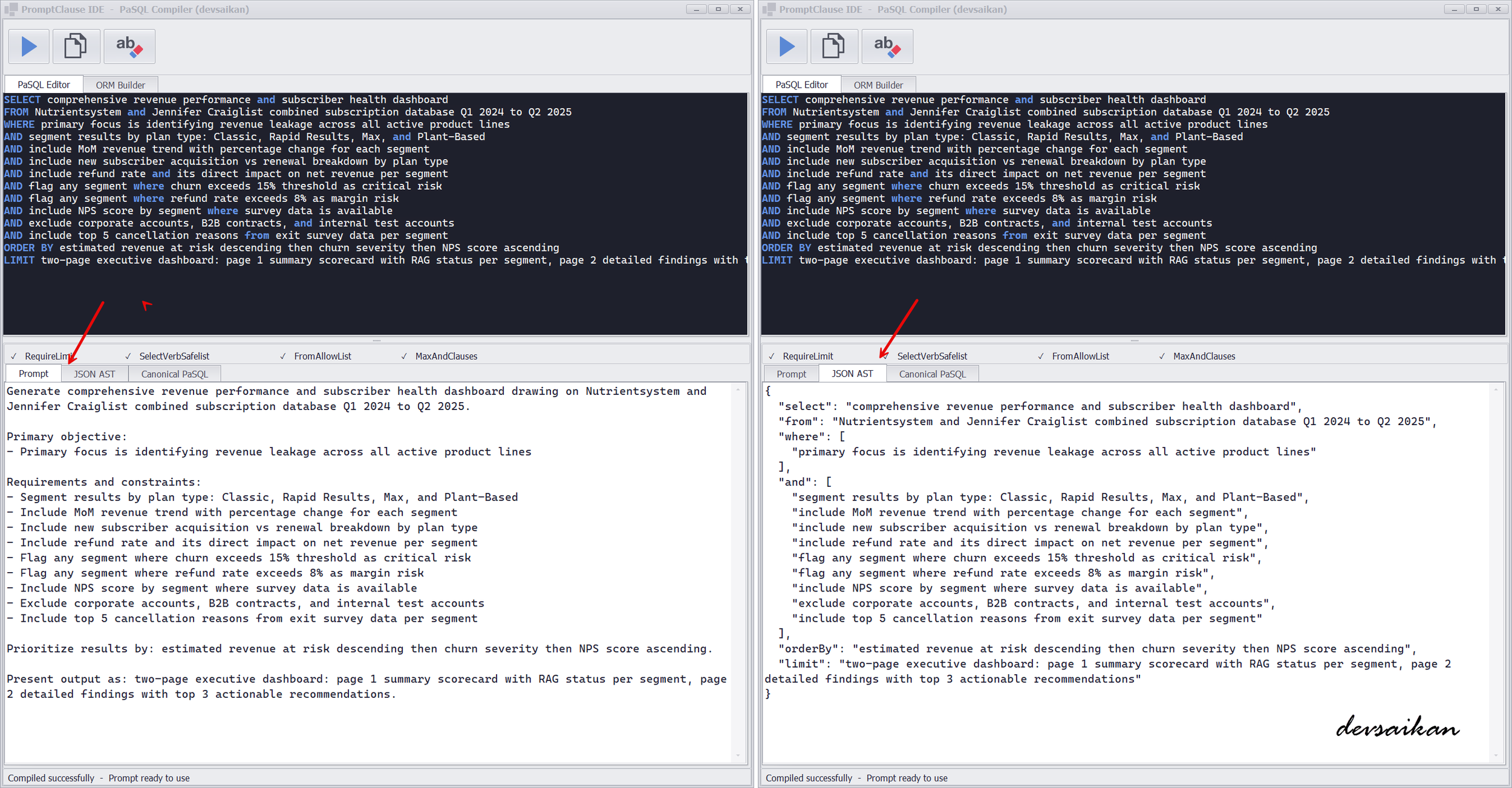

To make PaSQL accessible to non-developers, I built a WinForms IDE with two input modes:

PaSQL Editor - a dark-themed code editor with syntax highlighting. Keywords light up in blue as you type. Write your query, click GO, get your compiled prompt.

ORM Builder - a form-based interface. Fill in labeled fields (SELECT, FROM, WHERE, AND, ORDER BY, LIMIT), click GO, get the same compiled prompt. No syntax knowledge required.

Both modes produce identical output: a rendered natural language prompt, a JSON AST for audit logging, and a canonical PaSQL representation for version control.
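One plausible way to produce that canonical representation is to print the AST back out in a fixed clause order with whitespace collapsed, so equivalent queries yield byte-identical text and diffs show only real changes. `CanonicalPaSql.Write` is a hypothetical name, and the one-clause-per-line layout with `\n` line endings is an assumption.

```csharp
using System.Collections.Generic;
using System.Text;
using System.Text.RegularExpressions;

// Trimmed copy of the article's AST so this sketch compiles on its own.
public sealed class PaSqlAst
{
    public string? Select { get; init; }
    public string? From { get; init; }
    public List<string> Where { get; init; } = new();
    public List<string> And { get; init; } = new();
    public string? OrderBy { get; init; }
    public string? Limit { get; init; }
}

public static class CanonicalPaSql
{
    // Fixed clause order, one clause per line, runs of whitespace collapsed to one space,
    // and "\n" line endings on every platform so the text diffs and hashes consistently.
    public static string Write(PaSqlAst ast)
    {
        var sb = new StringBuilder();
        void Emit(string keyword, string? value)
        {
            if (string.IsNullOrWhiteSpace(value)) return; // absent clauses are omitted
            sb.Append(keyword).Append(' ').Append(Regex.Replace(value.Trim(), @"\s+", " ")).Append('\n');
        }

        Emit("SELECT", ast.Select);
        Emit("FROM", ast.From);
        foreach (var w in ast.Where) Emit("WHERE", w);
        foreach (var a in ast.And) Emit("AND", a);
        Emit("ORDER BY", ast.OrderBy);
        Emit("LIMIT", ast.Limit);
        return sb.ToString();
    }
}
```

Since the editor and the ORM builder both produce the same AST, writing canonical text from the AST is what lets the two input modes converge on identical files under version control.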

PromptClause IDE - by Saif Khan

What's Next

PaSQL is now integrated into my VMS MCP server as a live tool. When a user prefixes their request with USE PASQL, the entire governed pipeline fires: compile, validate, policy check, log, render, execute.
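The `USE PASQL` gate itself can be as small as a prefix check. This sketch assumes the match is case-insensitive and that the prefix must be followed by whitespace or the end of the request; `PaSqlRouter.TryGetPaSqlBody` is a hypothetical name, and the compile, validate, policy check, log, render, and execute stages would then run on the returned body.

```csharp
using System;

public static class PaSqlRouter
{
    const string Prefix = "USE PASQL";

    // True (with the PaSQL body) when the request opts into the governed pipeline;
    // false means the request takes the normal, ungoverned path.
    public static bool TryGetPaSqlBody(string request, out string body)
    {
        string trimmed = request.TrimStart();
        bool match = trimmed.StartsWith(Prefix, StringComparison.OrdinalIgnoreCase)
            && (trimmed.Length == Prefix.Length || char.IsWhiteSpace(trimmed[Prefix.Length]));
        body = match ? trimmed[Prefix.Length..].Trim() : "";
        return match;
    }
}
```

Requiring whitespace after the prefix keeps a request that merely starts with something like `USE PASQLX` from being routed into the compiler by accident.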

Coming next:

Model routing via a MODEL clause

Agent-to-agent messaging using compiled ASTs

GitHub release

The compiler is built. The ORM is tested. The MCP integration is live (see my LinkedIn for videos on MCP).

SQL gave databases structure and governance 50 years ago. PaSQL brings that same discipline to AI.